In fall 2014, I was asked to help a team of developers build Geodatapusher, an internal application for the City that lets Geospatial Information Systems (GIS) users easily publish geospatial data.

Previously, to publish new geospatial data (or update existing data), a GIS SPOC (single point of contact) had to submit a help desk ticket to the IT department describing the need, data source, data destination, and schema requirements. They then waited for a GIS analyst to validate the data, ensure it met standards, and perform the work. This process could take up to a week.

I guided the team through the following methods:

- User Personas

- User Stories

- Comparative Analysis

- Site Mapping

- Wireframing

- Prototyping

- User Testing

We ran the project using an agile process with two-week sprints. The entire project lasted about 20 months.

1. User Personas and User Stories

We created user stories for each persona in Trello and referred back to them throughout the design process.

- Data Manager Dan

- Dan is a GIS SPOC who will use Geodatapusher to publish geospatial data.

- Administrator Ann

- Ann currently responds to help desk tickets. She will use Geodatapusher to publish data and will manage user accounts.

- Curious Cal

- Cal is a GIS user who may want to see which datasets are being updated in Geodatapusher.

2. Comparative Analysis

We reviewed the current process and examined two existing applications:

- Open DocMan: Open Source Document Management System

- P2S: Project Programming System

3. Site Mapping

Final Sitemap

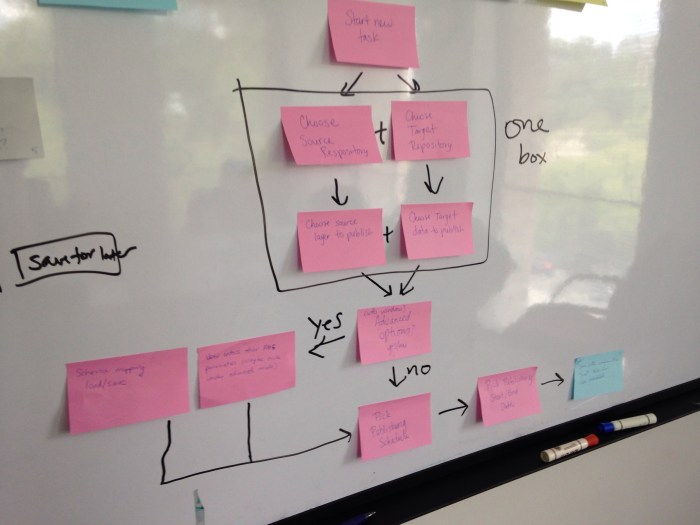

4. Wireframing

After the sitemap was completed and reviewed, the team held a session to sketch initial wireframes based on it. We went around the room presenting our sketches, then began consolidating the different ideas.

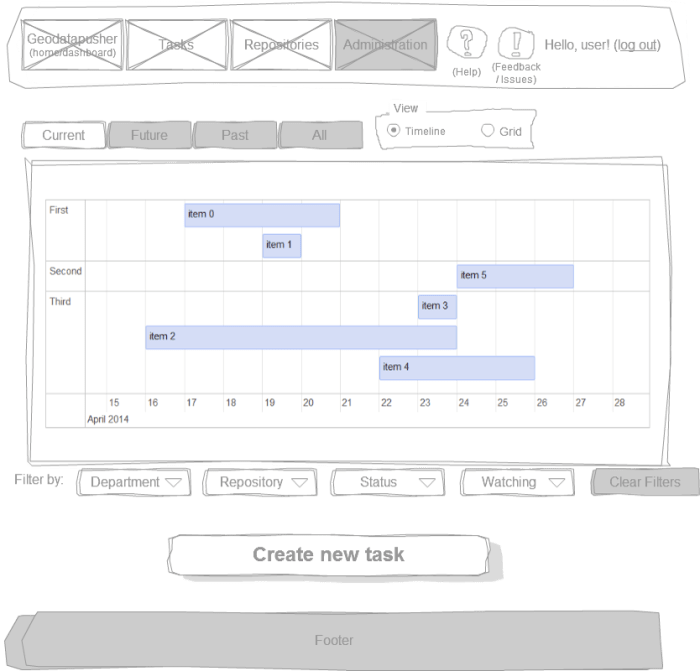

5. Prototyping

Using Balsamiq and Cacoo, we turned our sketches into lo-fi and hi-fi prototypes.

6. User Testing

Round 1 of user testing used both printouts and a hi-fi prototype of the publishing wizard. We tested with five people who matched our personas.

Users were asked to walk through specific scenarios and to answer questions about labels, look, and feel.

We consolidated the responses from testing and integrated the resulting changes as the application was built. Round 2 involved testing the MVP, with additional changes made before going live.

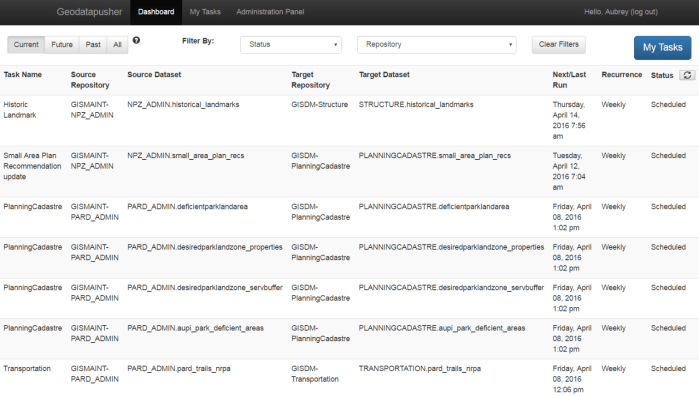

Screenshot of MVP Dashboard

Results: Ongoing feedback is captured through a survey that is emailed to users twice a year and linked from the navigation bar.

Current analytics on the site show:

- 46 users (some accounts are shared, so the actual number is higher)

- 411 tasks performed (112 on a recurring schedule)

- 698 actions performed (228 on a recurring schedule)